The AI hype was everywhere.

Everywhere you looked, someone was calling it revolutionary, mind-blowing, or the future of business. Influencers were selling dreamy lifestyles.

Mainstream media was repeating it too, without its usual skepticism.

Software companies were wrapping old promises in new AI packaging and calling it transformation.

And if you were running a business, it was honestly hard not to get pulled into the giant wave.

You were being told that AI could help you do more with less. Fewer people, faster work, lower costs, cleaner operations. Suddenly the dream was not just better software. It was a leaner business with fewer headaches.

That all sounded great on paper.

A lot of businesses bought into that vision. Many started using AI for support, content, research, internal tasks, and day-to-day workflows. Some went further and started rebuilding the business around it.

That is where things started getting messy.

Because once you start making real business decisions around AI, this stops being about productivity hacks. Now it is about dependence.

Now it is about whether the returns are actually there, whether the tool can hold up, and whether your business can still function properly if the hype fades faster than the product improves. And if it can't, are there any refunds? (Not really, no.)

And that’s exactly what happened with Walmart and OpenAI’s Instant Checkout.

The feature launched with the usual excitement, but the results were underwhelming. Walmart later said direct in-chat checkout converted far worse than sending shoppers back to Walmart’s own site, and the model itself got reworked.

When these overhyped AI products stumble, the people who sold the dream usually move on to the next shiny thing.

Real businesses do not get that luxury.

They are the ones left explaining the weak numbers, fixing broken workflows, and dealing with the cost of trusting the hype too early.

One of the most talked-about AI video products on the market is being shut down because it is not ‘profitable enough’.

It would have sounded ridiculous a few months ago. A product this visible, this hyped, and this aggressively pushed as part of the AI future was not supposed to feel temporary.

Sora became one of the most talked-about AI video tools on the market almost as soon as it launched.

But that is exactly the point - something can be all over headlines, strategy decks, and creative conversations and still be far less stable than businesses assume.

Immediately after the Sora announcement, reports emerged that Disney was caught off guard and decided to end its $1 billion partnership with OpenAI.

A Reddit user commenting on the news explained why it happened: “They lost SO much money every time someone used this, and it had almost no revenue potential. This was pretty well publicized.”

Many artists today celebrated Sora’s demise as a win for creativity, ingenuity and human originality.

Like OpenAI, Google has also been cracking down on AI, but not in the neat little “AI content bad, human content good” way people keep repeating on LinkedIn.

Google's recent algorithm updates make it clear that it favors content that follows its E-E-A-T guidelines. Its spam update, which came exactly two years ago, specifically targeted scaled content abuse, including websites that used AI to publish content at scale with little human oversight or value added.

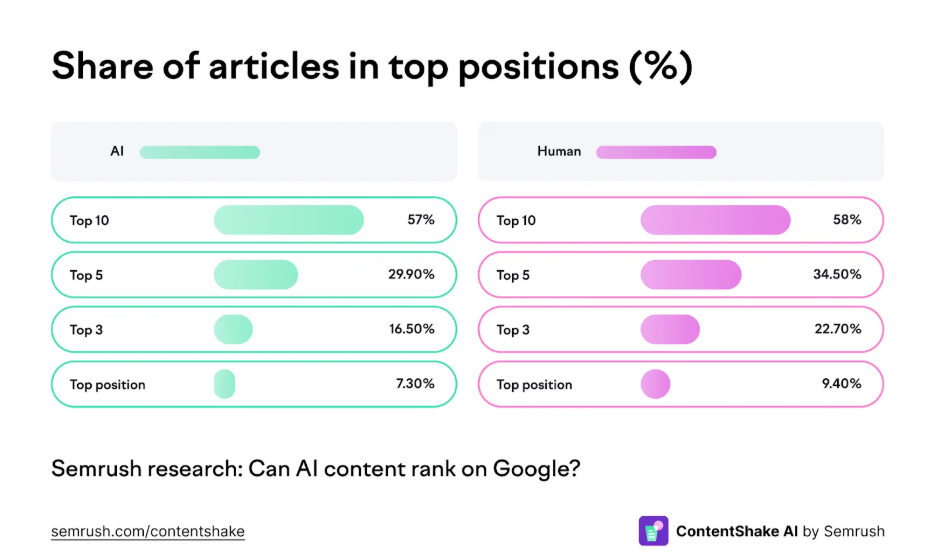

According to a Semrush study of 20,000 articles, there is a gap in rankings between AI-written and human-written content, but it is not as vast as you may think.

However, Google is actively punishing websites that exploit AI to manipulate search rankings.

“Our blog traffic has been consistently going down since April. From 160K in the beginning of the year, we are at 37k as of today,” one website owner vented on Reddit. “These are the things we’ve done so far to reverse this - Unpublished AI generated content.”

But recovery rarely works that way - once you get shadowbanned or penalized by Google, it’s an uphill battle from there.

The AI feature can disappear, the rankings can drop, the shiny new checkout flow can underperform, but your responsibilities do not go anywhere.

Customers still expect a response, employees still need clarity, and orders still need attention.

And if the business supports your family too, this stops feeling like a tech story very quickly. It starts feeling personal.

Now you are left doing the kind of work nobody mentions during the hype cycle.

That is what makes overdependence expensive. The problem is not just that the AI tool failed to make life easier. It is that when it fails, your business is the one stuck cleaning up after it.

You were told AI would help you run leaner, move faster, and need fewer people. A lot of businesses believed that.

The World Economic Forum found that about 40% of employers expected to reduce headcount where AI could automate tasks, and Orgvue’s research found 39% of business leaders had already made employees redundant because of AI. More awkwardly, 55% of those leaders later admitted they got some of those decisions wrong.

As a business owner, you cut people because the new tool looked good enough.

You trimmed writers, support staff, researchers, or ops help because the software demo looked smoother than real life usually is.

Then the team spends months worrying, adjusting, overchecking, and trying to make the new setup work while pretending everything is under control.

And if it does not work, nobody hands you those lost hours back.

So, after all the excitement, you could end up right back where you started.

Only now you are doing it with less certainty, more disruption, and no real guarantee the next “must-adopt” AI tool will not pull the same trick.

It is a brilliant marketing line. Clean, catchy, a little threatening, and perfect for getting people to open a new tab and start “learning AI” before lunch.

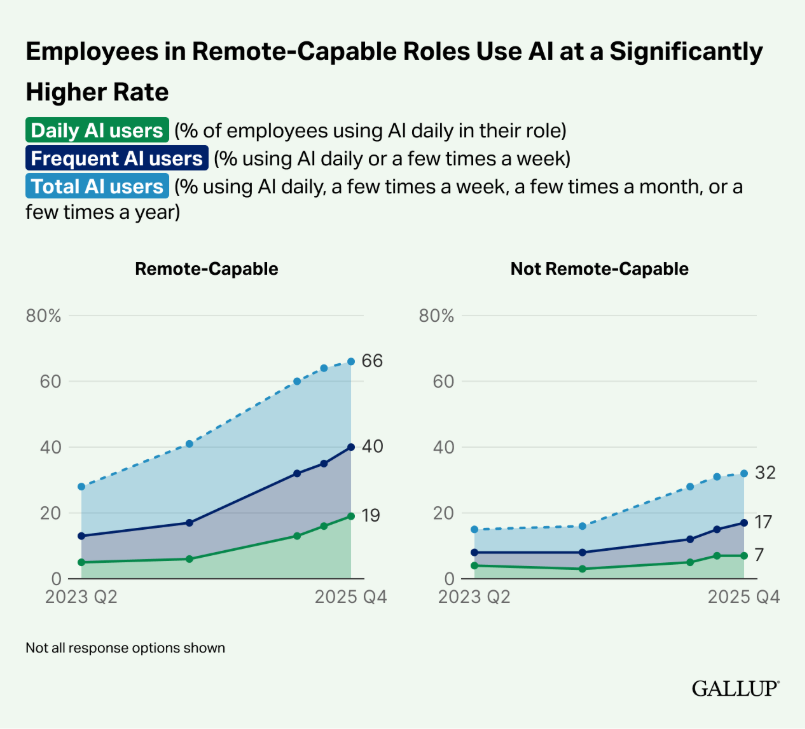

And it worked. Gallup says AI use in remote-capable jobs in the U.S. reached 66% by 2025, with 40% using it frequently.

So yes, the slogan did its job. It got a lot of people, and a lot of businesses, to start treating AI like the new minimum requirement for staying relevant.

The hype machine keeps feeding it too. Every few days, someone important says we are basically standing at the gates of AGI.

Just this week, Nvidia CEO Jensen Huang said, “I think we’ve achieved AGI,” and then partly walked it back.

Which is honestly very on-brand for this whole era - make the biggest possible claim first, sort out the fine print later.

You can see that pattern getting repeated over and over. Grand claim. Quiet caveat. Repeat.

Let's be precise about this, because the moment you question the hype, someone accuses you of being a Luddite.

AI is useful. In specific, bounded ways, it is genuinely impressive. No one can argue against that.

It can accelerate research - the kind of work that used to take an afternoon of tab-hopping now takes twenty minutes. It can produce workable first drafts that get you past the terror of the blank page. It can sort through databases, summarise dense reports, and surface patterns across thousands of documents faster than any human team.

For developers, it can generate boilerplate code, prototype ideas, and build quick proofs of concept. For marketers, it can brainstorm angles you hadn't considered. For customer teams, it can handle the tier-one queries that were soul-destroying for humans anyway.

But these gains are not the revolution that was promised.

Harvard Business Review published a study in September 2025 on what they called "workslop" - AI-generated content that looks polished but lacks real substance, effectively offloading cognitive labour onto the person who has to read, verify, and fix it. For a large organisation, the researchers estimated this adds up to more than $9 million a year in lost productivity. Over half of respondents said workslop made them feel annoyed. And yes, nearly a quarter felt offended.

First: breathe.

Not because there's nothing to do, but because - unlike the AI companies burning through billions in quarterly losses - your business doesn't have that kind of money. You don't need to move at their pace. You need to move at yours.

Here's what that looks like:

Don't try to fix what isn't broken. If your team has a workflow that produces good results on time, the burden of proof is on the AI tool to improve it - not on you to justify why you haven't adopted it yet. Adoption without reliable improvement is just expense.

Raise your scepticism. Not to the point of cynicism, but to the point of useful interrogation. When someone tells you AI will transform your business, ask them: which process, by how much, measured how? If they can't answer, they're selling you a feeling, not a solution. And yes, always ask them what percentage of their own customers come back to buy month after month, and how many just try the fancy new tool and give up.

Follow researchers, not influencers. The Harvard Business Review, the San Francisco Fed, MIT, Gallup, Pew Research - these organisations are doing the slow, unglamorous work of measuring what's actually happening. They won't give you a dopamine hit on your morning scroll. But they'll save you from expensive mistakes. If that feels like too much, follow industry voices you have always respected - people you know will never sell their soul to the latest hype, and who stay focused on the common concerns of businesses like yours.

Remember that we still live in a human world. Your customers are human. Your employees are human. The trust that holds your business together is human. No amount of automation changes the fact that the most valuable things in commerce - judgment, taste, relationships, reputation - are not things a language model can generate.

AI companies would very much like you to forget this. It's rather inconvenient for their valuations.

Don't forget it.